¶ Edge AI Layer

On-device machine learning models that run entirely on the microcontroller, with no cloud or internet connection required.

¶ Deployed Models

| Model | Device | Task Type | Input | Output | Status |
|---|---|---|---|---|---|
| Fire Alarm Siren Detector | Thermostat (nRF5340) | Audio classification | PDM microphone → 16 kHz PCM | Binary: alarm / no alarm | ✅ Deployed |
| Child Crying Detector | Thermostat (nRF5340) | Audio classification | PDM microphone → 16 kHz PCM | Binary: crying / no crying | 🔮 Planned |
| Dog Barking Detector | Thermostat (nRF5340) | Audio classification | PDM microphone → 16 kHz PCM | Binary: barking / no barking | 🔮 Planned |

¶ Model Architecture & Specifications

| Parameter | Fire Alarm Siren Detector |
|---|---|
| Framework | Edge Impulse SDK (wraps TFLite Micro internally) |
| Model type | Convolutional neural network (CNN) for audio classification |
| Preprocessing | Mel-frequency cepstral coefficients (MFCC) extracted from a 1 s audio window |
| Training data | 98 fire alarm recordings + 269 background noise samples |
| Quantization | INT8 post-training quantization (full integer) |
| Model size | ~30–50 KB (.tflite flatbuffer) |
| RAM usage | ~40–60 KB tensor arena (allocated from App core SRAM) |
| Inference target | nRF5340 Application core (Cortex-M33, 128 MHz) |
| Inference latency | < 100 ms per 1 s audio window |
| Power impact | Microphone and inference are active only during the listening window; duty-cycled to preserve battery |
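
To make the "INT8 (full integer)" row concrete: TFLite-style quantized tensors map an 8-bit integer `q` to a real value through `real = scale * (q - zero_point)`. The following is a minimal sketch of that mapping in C. The function names are hypothetical, and the scale and zero-point values mentioned below are illustrative, not values read from the deployed model.

```c
#include <stdint.h>

/* Map a real value to INT8 using an affine scheme:
 * q = round(x / scale) + zero_point, clamped to [-128, 127].
 * Rounding is done by hand so the sketch does not depend on libm. */
int8_t quantize_int8(float x, float scale, int32_t zero_point)
{
    float v = x / scale;
    int32_t q = (int32_t)(v >= 0.0f ? v + 0.5f : v - 0.5f) + zero_point;

    if (q < -128) {
        q = -128;
    }
    if (q > 127) {
        q = 127;
    }
    return (int8_t)q;
}

/* Inverse mapping: real = scale * (q - zero_point). */
float dequantize_int8(int8_t q, float scale, int32_t zero_point)
{
    return scale * (float)((int32_t)q - zero_point);
}
```

As an illustration only, with an assumed scale of 1/256 and zero point of -128, an INT8 value of 77 dequantizes to about 0.80, so a 0.8 confidence threshold would correspond to roughly `q >= 77` under those parameters.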

¶ Compute Constraints

| Resource | nRF5340 App Core | Model Budget |
|---|---|---|
| Flash | 1 MB total | ~50 KB for model + ~20 KB for TFLite runtime |
| RAM | 512 KB total | ~60 KB tensor arena (shared with application) |
| CPU | Cortex-M33 @ 128 MHz | DSP extensions used for MFCC; no FPU dependency (INT8) |
| Accelerator | None (pure CPU inference) | Sufficient for single-model, sub-second classification |
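
The budget figures above can be checked with a little arithmetic. The sketch below encodes them as constants, assuming "1 MB" means 1024 KiB and each "KB" means 1024 bytes, and taking the upper end of each approximate budget. The macro and function names are hypothetical and are not part of the firmware.

```c
#include <stdint.h>

#define KIB(n) ((uint32_t)(n) * 1024u)

/* Totals for the nRF5340 application core, from the table above. */
#define APP_FLASH_TOTAL      KIB(1024)  /* 1 MB flash */
#define APP_RAM_TOTAL        KIB(512)   /* 512 KB SRAM */

/* Upper-end budgets from the table above (approximate figures). */
#define MODEL_FLASH_BUDGET   KIB(50)
#define RUNTIME_FLASH_BUDGET KIB(20)
#define TENSOR_ARENA_BUDGET  KIB(60)

/* Compile-time sanity checks: the budgets must fit in the totals. */
_Static_assert(MODEL_FLASH_BUDGET + RUNTIME_FLASH_BUDGET < APP_FLASH_TOTAL,
               "model + runtime must fit in application flash");
_Static_assert(TENSOR_ARENA_BUDGET < APP_RAM_TOTAL,
               "tensor arena must fit in application RAM");

/* Share of a resource in tenths of a percent (per mille), rounded down. */
uint32_t budget_permille(uint32_t used_bytes, uint32_t total_bytes)
{
    return (uint32_t)(((uint64_t)used_bytes * 1000u) / total_bytes);
}
```

Under these assumptions, the model plus runtime take about 6.8% of flash (70 KB of 1 MB) and the tensor arena about 11.7% of RAM (60 KB of 512 KB).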

¶ Inference Pipeline

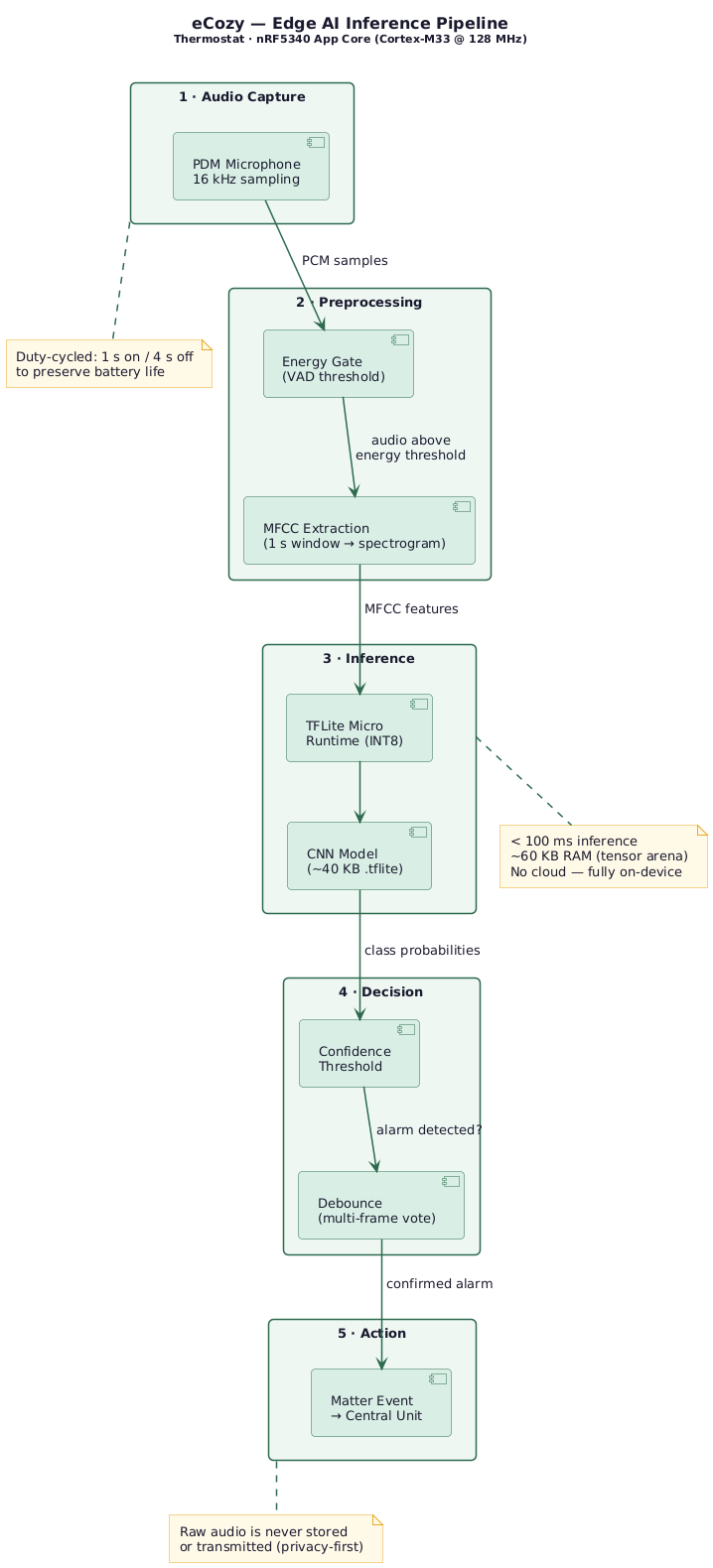

The pipeline runs entirely on the nRF5340 Application core and consists of five stages:

| Stage | Name | Description |
|---|---|---|
| 1 | Audio Capture | The PDM microphone samples audio at 16 kHz. Capture is duty-cycled (1 s on / 4 s off) to preserve battery. |
| 2 | Preprocessing | An energy gate (VAD) discards silence. If the audio energy exceeds the threshold, the 1-second window is processed by the Edge Impulse DSP pipeline into MFCC features for the classifier. |
| 3 | Inference | The preprocessed features are fed into a quantized INT8 classifier (~40 KB) running via the Edge Impulse runtime on the Cortex-M33. Inference completes in < 100 ms using ~60 KB of RAM. No cloud is involved. |
| 4 | Decision | Each inference result with alarm-class confidence > 0.8 increments an event counter. The alarm is confirmed only after 3 positive detections within a 10-second window, which reduces false positives. The confirmed alarm state auto-resets after 15 seconds. |
| 5 | Action | On a confirmed alarm, a Matter event is sent to the Central Unit, which can forward it to the cloud and trigger push notifications in the mobile app. Raw audio is never stored or transmitted. |
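
The decision stage (stage 4) can be sketched as a small state machine. This is a minimal illustration in C, not the firmware's actual implementation: the type and function names are hypothetical, timestamps are assumed to come from a millisecond uptime counter, the 10-second bound is treated as inclusive, and positives that arrive while the alarm is already confirmed are assumed to be ignored.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define CONF_THRESHOLD 0.8f    /* per-inference alarm confidence */
#define HITS_REQUIRED  3       /* positives needed to confirm */
#define HIT_WINDOW_MS  10000u  /* window in which positives must fall */
#define ALARM_HOLD_MS  15000u  /* confirmed state auto-resets after this */

typedef struct {
    uint32_t hit_time_ms[HITS_REQUIRED]; /* timestamps of recent positives */
    size_t   hit_count;
    bool     alarm_active;
    uint32_t alarm_since_ms;
} alarm_filter_t;

/* Feed one inference result.
 * Returns true only on the call that newly confirms an alarm. */
bool alarm_filter_update(alarm_filter_t *f, float confidence, uint32_t now_ms)
{
    /* Auto-reset a confirmed alarm once the hold time has elapsed. */
    if (f->alarm_active && (now_ms - f->alarm_since_ms) >= ALARM_HOLD_MS) {
        f->alarm_active = false;
        f->hit_count = 0;
    }

    /* Drop positives that have fallen out of the window. */
    size_t kept = 0;
    for (size_t i = 0; i < f->hit_count; i++) {
        if ((now_ms - f->hit_time_ms[i]) <= HIT_WINDOW_MS) {
            f->hit_time_ms[kept++] = f->hit_time_ms[i];
        }
    }
    f->hit_count = kept;

    /* Assumption: positives are ignored while the alarm is confirmed. */
    if (f->alarm_active || confidence <= CONF_THRESHOLD) {
        return false;
    }

    /* hit_count is always below HITS_REQUIRED here, so this stays in bounds. */
    f->hit_time_ms[f->hit_count++] = now_ms;
    if (f->hit_count < HITS_REQUIRED) {
        return false;
    }

    f->alarm_active = true;
    f->alarm_since_ms = now_ms;
    f->hit_count = 0;
    return true; /* caller would send the Matter event here */
}
```

A zero-initialized `alarm_filter_t` is a valid starting state. With the 1 s on / 4 s off duty cycle, listening windows start 5 s apart, so three consecutive positives span exactly 10 s; that is why the sketch uses an inclusive comparison for the window bound.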

¶ Design Principles

- Fully offline: all inference runs on-device; no audio or feature data leaves the thermostat, only the confirmed-alarm event is reported

- Privacy-first: raw audio is never stored or transmitted; only the classification result is used

- Battery-aware: the microphone is duty-cycled (e.g., 1 s on / 4 s off); inference runs only when the audio energy exceeds a threshold

- Updateable: the TFLite model is a separate binary blob that can be updated via OTA without reflashing the full firmware

- Extensible: additional models (crying, barking, glass break) share the same pipeline and are loaded sequentially into the tensor arena
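
The battery-aware principle relies on a cheap energy check before any DSP or inference work. A minimal sketch of such a gate over 16-bit PCM samples is shown below; the function names and the threshold parameter are hypothetical, and the actual firmware may measure energy differently.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Mean-square energy of a block of signed 16-bit PCM samples. */
uint32_t pcm_mean_square(const int16_t *samples, size_t n)
{
    if (n == 0) {
        return 0;
    }

    uint64_t acc = 0;
    for (size_t i = 0; i < n; i++) {
        int32_t s = samples[i];
        acc += (uint64_t)(s * s); /* at most 2^30, so no int32 overflow */
    }
    return (uint32_t)(acc / n);
}

/* Returns true when the block is loud enough to be worth classifying.
 * The threshold is an assumed, tunable value in squared sample units. */
bool energy_gate_open(const int16_t *samples, size_t n, uint32_t threshold)
{
    return pcm_mean_square(samples, n) > threshold;
}
```

In a pipeline like the one described here, the gate would run on each captured 1 s window, and feature extraction and inference would be skipped whenever it returns false.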

¶ Roadmap

| Phase | Model | Target |
|---|---|---|
| ✅ Current | Fire alarm siren detection | Deployed in thermostat firmware |
| 🔮 Next | Child crying / dog barking detection | Additional audio classifiers |
| 🔮 Future | Predictive heating (cloud-side) | Cloud ML: user behavior + weather patterns |
| 🔮 Future | Predictive maintenance | Cloud ML: telemetry anomaly detection |

¶ Related Pages

| Page | Description |
|---|---|
| 🏠 End-to-end System Overview | High-level view of all system layers and how they interconnect. |
| 🔩 Hardware Layer | Device inventory, chipsets, connectivity, and power design. |
| ⚙️ Firmware / Embedded Layer | OS/RTOS, firmware architecture, OTA updates, and device security. |
| ☁️ Cloud Layer | Backend infrastructure, application architecture, and data storage. |
| 📱 Application Layer | Mobile apps, dashboards, and API integrations. |